Joint-Task Regularization for Partially Labeled Multi-Task Learning

(IEEE Conference on Computer Vision and Pattern Recognition (CVPR)), 2024.

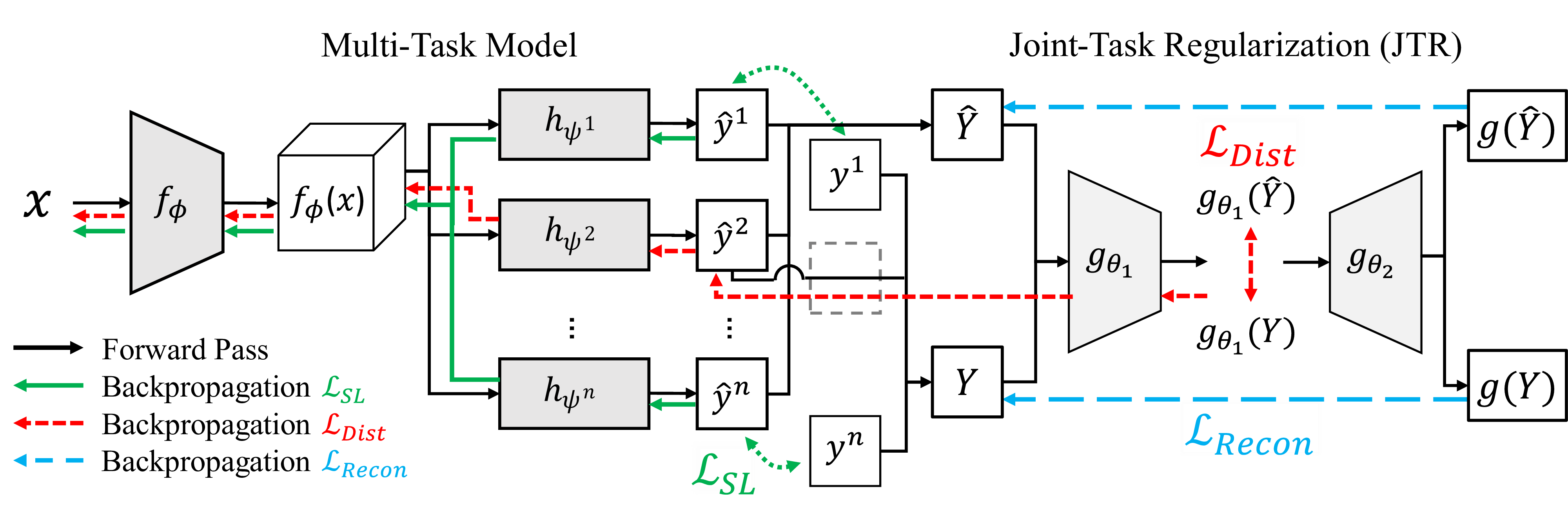

Multi-task learning has become increasingly popular in the machine learning field, but its practicality is hindered by the need for large, labeled datasets. Most multi-task learning methods depend on fully labeled datasets wherein each input example is accompanied by ground-truth labels for all target tasks. Unfortunately, curating such datasets can be prohibitively expensive and impractical, especially for dense prediction tasks which require per-pixel labels for each image. With this in mind, we propose Joint-Task Regularization (JTR), an intuitive technique which leverages cross-task relations to simultaneously regularize all tasks in a single joint-task latent space to improve learning when data is not fully labeled for all tasks. JTR stands out from existing approaches in that it regularizes all tasks jointly rather than separately in pairs -- therefore, it achieves linear complexity relative to the number of tasks while previous methods scale quadratically. To demonstrate the validity of our approach, we extensively benchmark our method across a wide variety of partially labeled scenarios based on NYU-v2, Cityscapes, and Taskonomy.

Acknowledgements

We thank all affiliates of the Harvard Visual Computing Group for their valuable feedback. This work was supported by NIH grant R01HD104969.