Segmentation Fusion for Connectomics

Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2011.

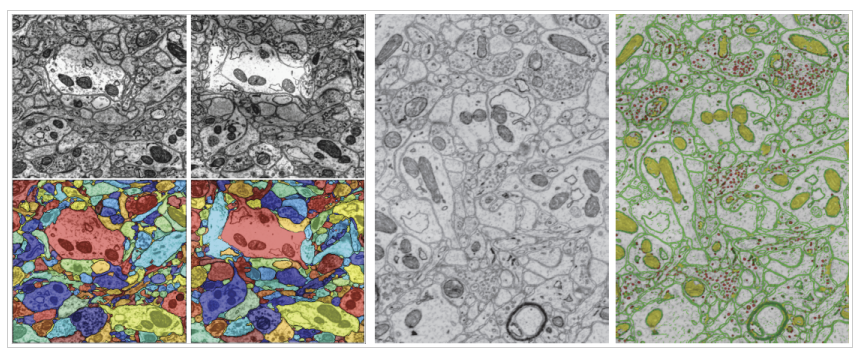

We address the problem of automatic 3D segmentation of a stack of electron microscopy sections of brain tissue. Previous efforts usually reconstruct the volume section by section or by agglomerative clustering of 2D segments. Instead, we leverage information from the entire volume to obtain a globally optimal 3D segmentation. To do this, we formulate the segmentation as the solution to a fusion problem. We first enumerate multiple possible 2D segmentations for each section in the stack, together with a set of 3D links that may connect segments across consecutive sections. We then identify the fusion of segments and links that provides the most globally consistent segmentation of the stack. We show that this two-step approach of pre-enumeration and posterior fusion yields significant advantages and provides state-of-the-art reconstruction results. Finally, as part of this method, we introduce a robust, rotation-invariant set of features for multi-class pixel labeling, which we use to learn and enumerate the above 2D segmentations. Our features outperform previous connectomics-specific descriptors without relying on a large set of heuristics or manually designed filter banks.
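The enumerate-then-fuse idea can be illustrated with a toy sketch. The abstract does not spell out the optimization, so this is not the paper's formulation. It assumes that every candidate 2D segment and every cross-section link carries a score (for example, a hypothetical classifier confidence). It treats a selection as "consistent" when the chosen segments exactly partition each section's pixels and links are active only between chosen segments. The fusion then maximizes the summed score. The function names `exact_covers` and `fuse`, the data layout, and all scores are hypothetical. Exhaustive search is used only because the toy is tiny, since its cost grows exponentially with the number of candidates.

```python
from itertools import product


def exact_covers(pixels, candidates):
    """Yield lists of candidate ids whose pixel sets partition `pixels` exactly.

    `candidates` maps a (hypothetical) segment id -> frozenset of pixel ids.
    """
    def search(remaining, chosen):
        if not remaining:
            yield list(chosen)
            return
        p = min(remaining)  # exactly one chosen segment must contain pixel p
        for cid, pix in candidates.items():
            if p in pix and pix <= remaining:  # subset check forbids overlaps
                chosen.append(cid)
                yield from search(remaining - pix, chosen)
                chosen.pop()

    yield from search(frozenset(pixels), [])


def fuse(sections, segments, links):
    """Brute-force fusion of candidate segments and links (toy illustration).

    sections: list of pixel-id sets, one per section.
    segments: seg_id -> (section_index, frozenset_of_pixels, score).
    links: (seg_a, seg_b) -> score, with seg_a in section z and seg_b in z + 1.
    Returns (best_total, selected_segments, active_links).
    """
    per_section = []
    for z, pixels in enumerate(sections):
        cands = {s: pix for s, (sz, pix, _) in segments.items() if sz == z}
        per_section.append(list(exact_covers(pixels, cands)))

    best = (float("-inf"), None, None)
    for covers in product(*per_section):  # one consistent 2D cover per section
        selected = {s for cover in covers for s in cover}
        total = sum(segments[s][2] for s in selected)
        # A link may be active only if both endpoints are selected; with no other
        # constraint, activating it pays off exactly when its score is positive.
        active = [(a, b) for (a, b), w in links.items()
                  if a in selected and b in selected and w > 0]
        total += sum(links[l] for l in active)
        if total > best[0]:
            best = (total, selected, active)
    return best


# Hypothetical toy stack: two sections of two pixels each.
sections = [{0, 1}, {0, 1}]
segments = {
    "A": (0, frozenset({0, 1}), 1.0),  # coarse candidate, section 0
    "B": (0, frozenset({0}), 0.4),     # finer candidates, section 0
    "C": (0, frozenset({1}), 0.4),
    "D": (1, frozenset({0, 1}), 0.5),  # coarse candidate, section 1
    "E": (1, frozenset({0}), 0.6),     # finer candidates, section 1
    "F": (1, frozenset({1}), 0.6),
}
links = {("A", "D"): 0.2, ("B", "E"): 0.9, ("C", "F"): 0.9, ("A", "E"): 0.3}

total, selected, active = fuse(sections, segments, links)
print(round(total, 2), sorted(selected), active)
# prints: 3.8 ['B', 'C', 'E', 'F'] [('B', 'E'), ('C', 'F')]
```

In this toy, the finer segmentations of both sections win even though the single coarse segment A scores higher on its own. Their strong cross-section links make the joint choice better, which is the kind of volume-wide trade-off a section-by-section approach would not see.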