Interactive and Visual Prompt Engineering for Ad-hoc Task Adaptation with Large Language Models

IEEE Transactions on Visualization and Computer Graphics, 2023.

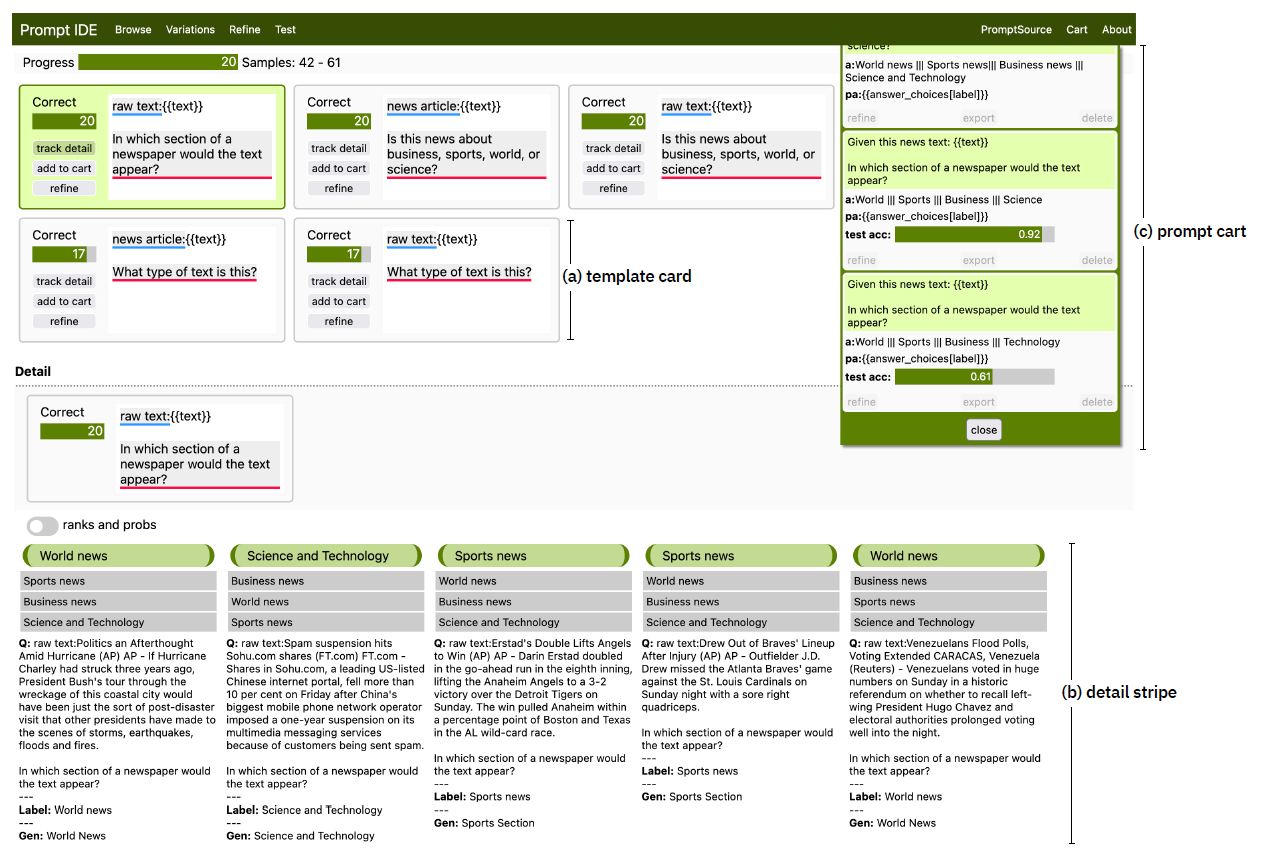

State-of-the-art neural language models can now be used to solve ad-hoc language tasks through zero-shot prompting without the need for supervised training. This approach has gained popularity in recent years, and researchers have demonstrated prompts that achieve strong accuracy on specific NLP tasks. However, finding a prompt for new tasks requires experimentation. Different prompt templates with different wording choices lead to significant accuracy differences. PromptIDE allows users to experiment with prompt variations, visualize prompt performance, and iteratively optimize prompts. We developed a workflow that allows users to first focus on model feedback using small data before moving on to a large data regime that allows empirical grounding of promising prompts using quantitative measures of the task. The tool then allows easy deployment of the newly created ad-hoc models. We demonstrate the utility of PromptIDE (demo: http://prompt.vizhub.ai) and our workflow using several real-world use cases.

Acknowledgements

This project was partially supported by NSF grant IIS-1901030.