Three approaches to facilitate invariant neurons and generalization to out-of-distribution orientations and illuminations

Neural Networks, 2022.

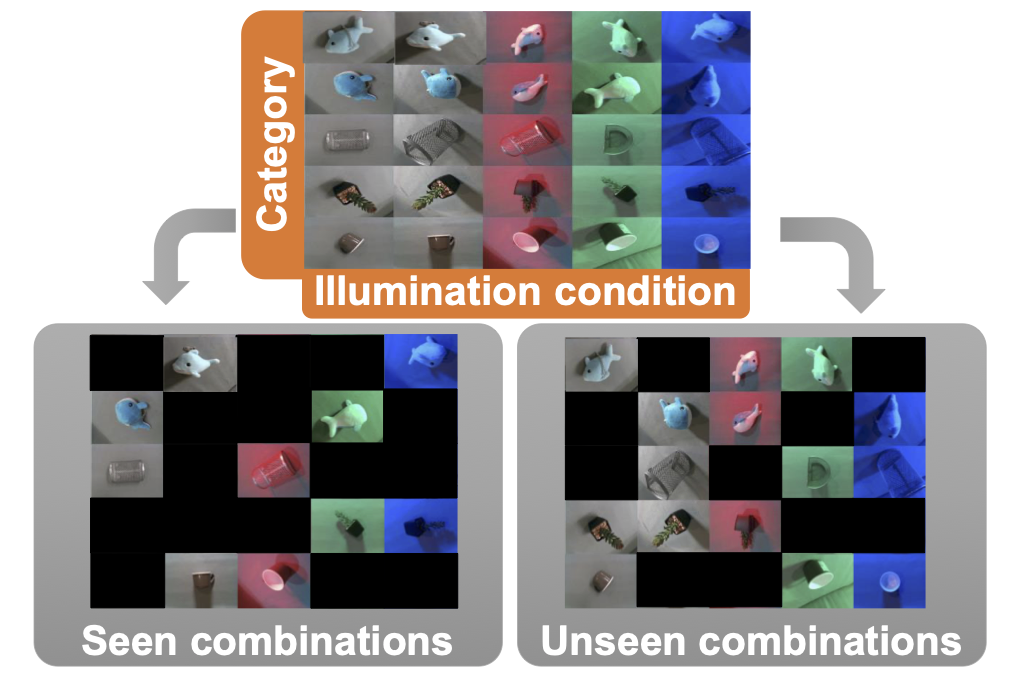

The training data distribution is often biased towards objects in certain orientations and illumination conditions. While humans have a remarkable ability to recognize objects in out-of-distribution (OoD) orientations and illuminations, Deep Neural Networks (DNNs) suffer severely in this case, even when large amounts of training examples are available. Neurons that are invariant to orientations and illuminations have been proposed as a neural mechanism that could facilitate OoD generalization, but it is unclear how to encourage the emergence of such invariant neurons. In this paper, we investigate three different approaches that lead to the emergence of invariant neurons and substantially improve DNNs in recognizing objects in OoD orientations and illuminations. Namely, these approaches are (i) training much longer after the in-distribution (InD) validation accuracy has converged, i.e., late-stopping, (ii) tuning the momentum parameter of the batch normalization layers, and (iii) enforcing invariance of the neural activity in an intermediate layer to orientation and illumination conditions. Each of these approaches substantially improves the DNN’s OoD accuracy (by more than 20% in some cases). We report results on four datasets: two are modified from the MNIST and iLab datasets, and the other two are novel (one of 3D-rendered cars and another of objects photographed under various controlled orientations and illumination conditions). These datasets allow us to study the effects of different amounts of bias and are challenging, as DNNs perform poorly in OoD conditions. Finally, we demonstrate that even though the three approaches focus on different aspects of DNNs, they all tend to lead to the same underlying neural mechanism that enables the OoD accuracy gains: individual neurons in the intermediate layers become invariant to OoD orientations and illuminations.
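Approach (ii) depends on how batch normalization maintains the running statistics it uses at inference time. The sketch below illustrates only that standard update, not the paper's tuning procedure. The function names are hypothetical, and the code assumes the PyTorch convention, in which `momentum` weights the newest batch statistic (other frameworks, such as Keras, use the complementary convention). A larger momentum makes the running mean track recent batches more closely, while a smaller one averages over a longer history.

```python
def update_running_mean(running_mean, batch_mean, momentum):
    """One batch-norm running-statistic update (hypothetical helper).

    Assumes the PyTorch convention:
        new = (1 - momentum) * old + momentum * batch_statistic
    """
    return (1.0 - momentum) * running_mean + momentum * batch_mean


def running_mean_over_batches(batch_means, momentum, init=0.0):
    """Apply the update sequentially over a stream of per-batch means."""
    rm = init
    for bm in batch_means:
        rm = update_running_mean(rm, bm, momentum)
    return rm


if __name__ == "__main__":
    # Ten batches whose mean is 1.0, starting from a running mean of 0.0.
    batches = [1.0] * 10
    for m in (0.1, 0.5):
        # A higher momentum converges faster towards the recent batch mean.
        print(f"momentum={m}: running mean = {running_mean_over_batches(batches, m):.4f}")
```

Running it prints `0.6513` for `momentum=0.1` and `0.9990` for `momentum=0.5`, showing how strongly the momentum value shapes the statistics that the network sees at test time.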
We anticipate that this study will serve as a basis for further improving the OoD generalization performance of deep neural networks, which is essential for safe and fair AI applications.

Acknowledgements

We are grateful to Tomaso Poggio and Hisanao Akima for their insightful advice and warm encouragement. We thank Shinichi Matsumoto and Shioe Kuramochi for their assistance in creating the CarsCG and DAISO-10 datasets, respectively. This work was supported by Fujitsu Limited (Contract No. 40008819 and 40009105) and by the Center for Brains, Minds and Machines funded by NSF (National Science Foundation) STC (Science and Technology Centers) award CCF-1231216. PS and XB are partially supported by the R01EY020517 grant from the National Eye Institute (NIH), and SM and HP are partially funded by NSF grant IIS-1901030.